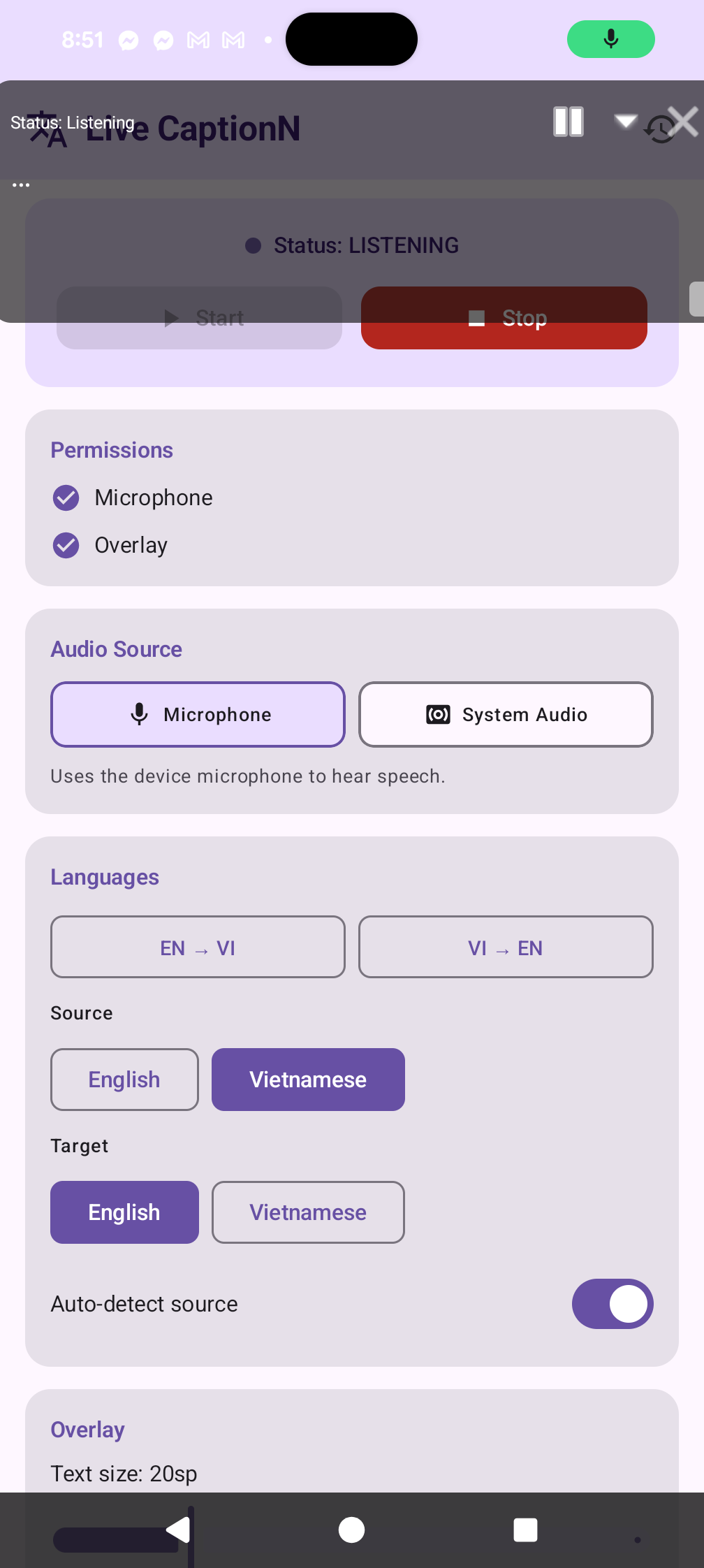

Speech → text: on-device Vosk

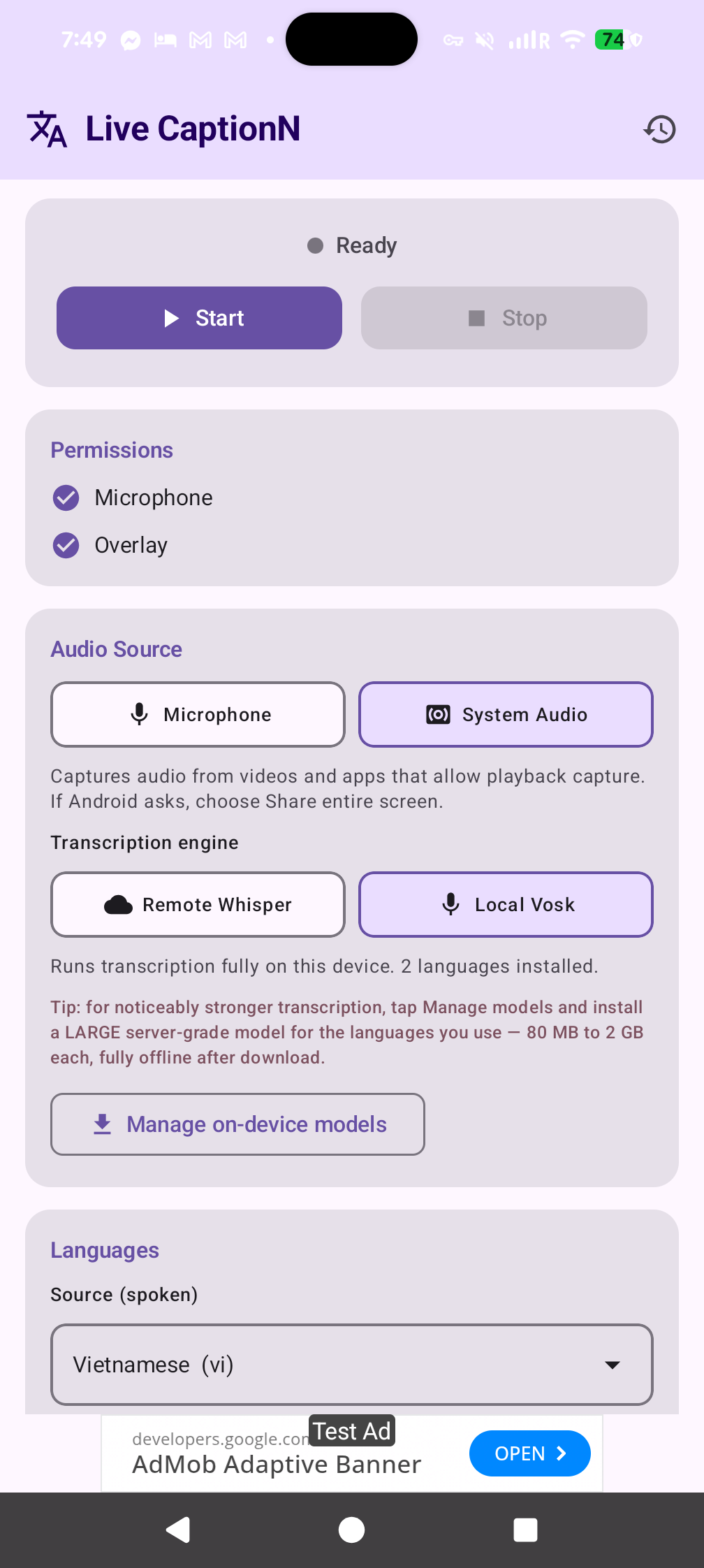

Pick Local Vosk and the source-language picker collapses to just the models installed on this phone. Two small models ship inside the APK (English and Vietnamese) so it works out of the box with zero downloads.

Tap Manage on-device models to grab more. The sheet offers two quality tiers for every supported language:

- Large · server-grade accuracy — full Vosk models with the lowest error rates. ~80 MB to ~2 GB each, downloaded once and cached forever. This is the strongest on-device transcription option.

- Small · fast & light — compact ~40 MB models for quick installs or low-storage phones.

Supported languages include English, Vietnamese, Spanish, French, German, Italian, Portuguese, Dutch, Russian, Ukrainian, Polish, Czech, Turkish, Arabic, Persian, Hindi, Chinese, Japanese, Korean, and Indonesian. Models are fetched from alphacephei.com/vosk/models over HTTPS and unzipped to app-private storage.

Text → text: Google ML Kit (on-device) or LibreTranslate

By default LiveCaptionN uses Google ML Kit's pre-trained Translate models, which run entirely on this device. ~59 supported languages, ~30 MB per language pair (downloaded the first time you use the pair, then cached offline forever). No server, no account, no telemetry.

If you want more languages or you already run your own infrastructure, switch to the LibreTranslate backend in settings and point the app at any LibreTranslate-compatible server. On URL change the app calls GET /languages and repopulates the pickers with whatever packages the server has.

Want to add more LibreTranslate languages? SSH into the host and install extra Argos packages:

argospm update

argospm install translate-en_ja translate-en_ko translate-en_fa

# …then restart LibreTranslate

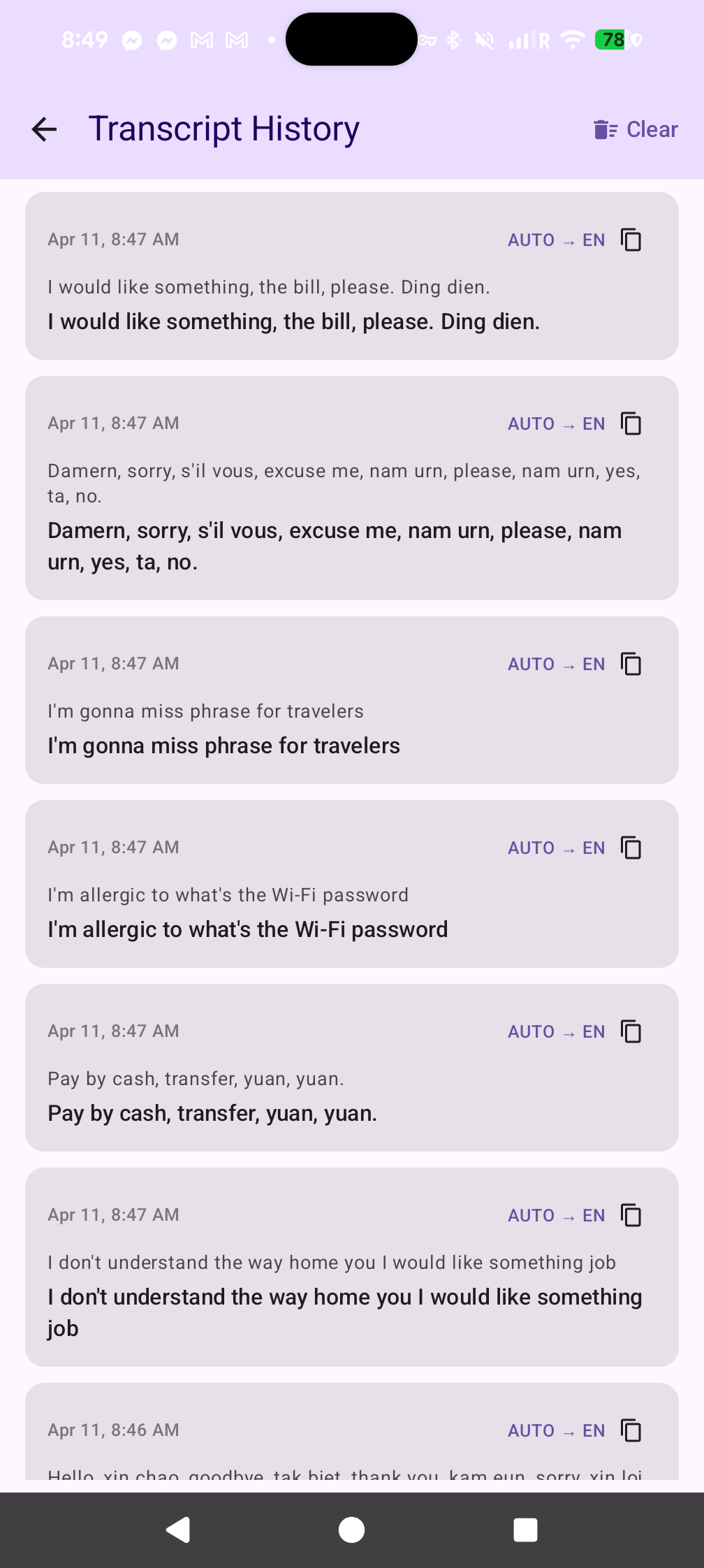

If the source and target language match (English speech → English captions), no translation call is made regardless of backend.